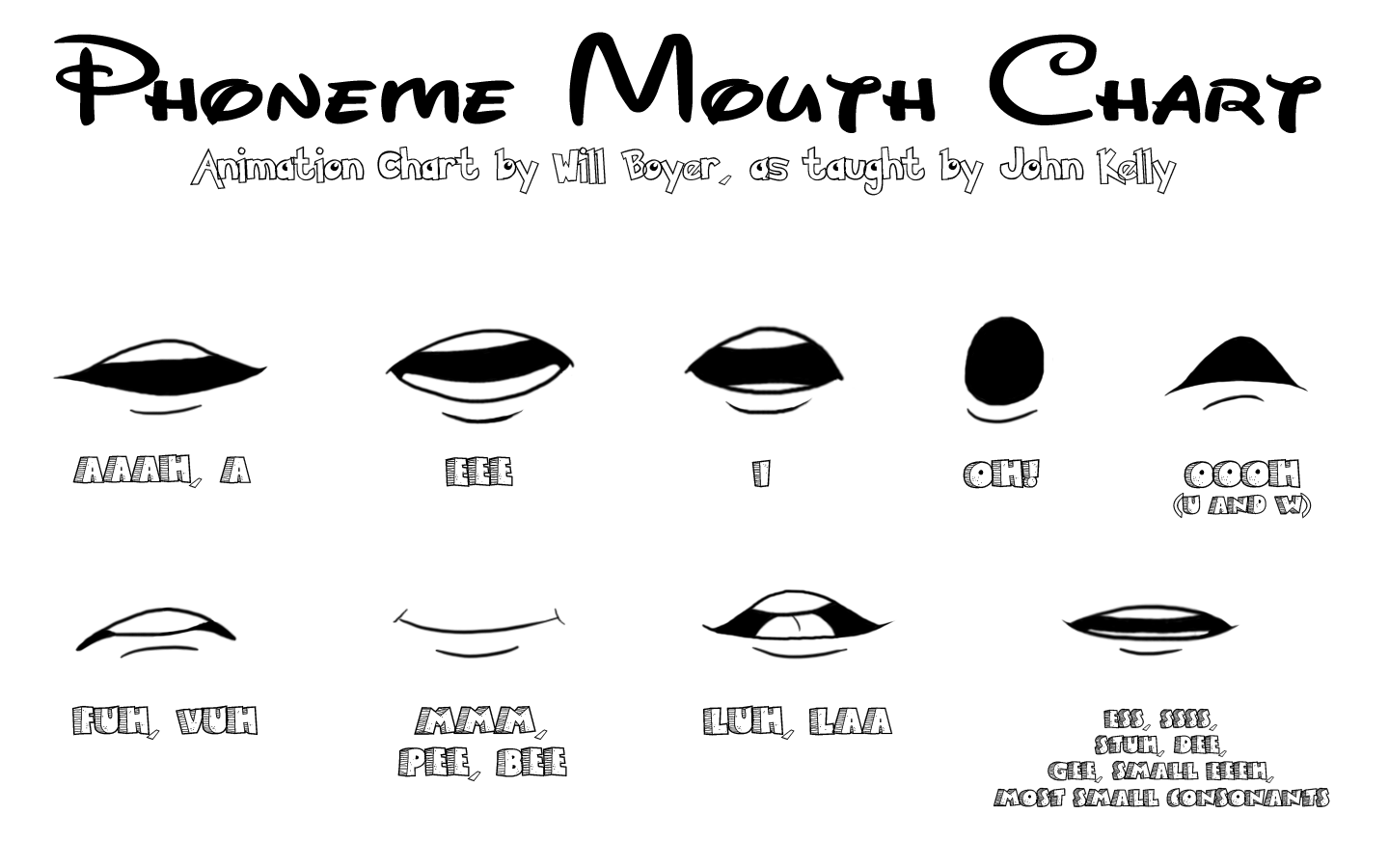

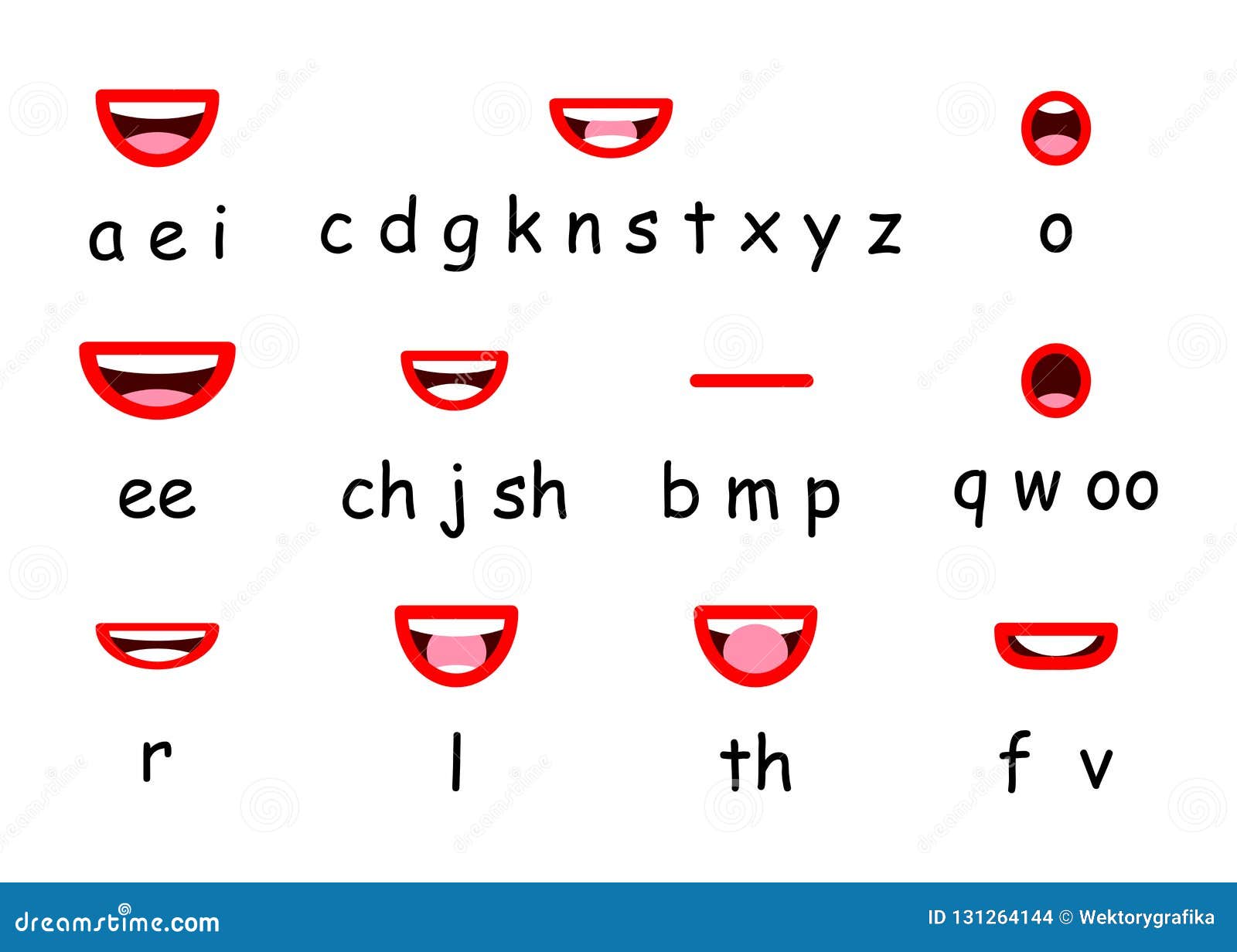

The emergence of commercial tools for real-time performance-based 2D animation has enabled 2D characters to appear on live broadcasts and streaming platforms. A key requirement for live animation is lip sync that allows characters to respond naturally to other actors or the audience through the voice of a human performer. In this work, we present a deep learning based interactive system that automatically generates live lip sync for layered 2D characters using a Long Short-Term Memory (LSTM) model. Our system takes streaming audio as input and produces viseme sequences with less than 200ms of latency (including processing time). Our contributions include specific design decisions for our feature definition and LSTM configuration that provide a small but useful amount of lookahead to produce accurate lip sync. We also describe a data augmentation procedure that allows us to achieve good results with a very small amount of hand-animated training data (13-20 minutes). Extensive human judgement experiments show that our results are preferred over several competing methods, including those that only support offline (non-live) processing.

Video summary and supplementary results at GitHub link:

For decades, 2D animation has been a popular storytelling medium across many domains, including entertainment, advertising and education. Traditional workflows for creating such animations are highly labor-intensive; animators either draw every frame by hand (as in classical animation) or manually specify keyframes and motion curves that define how characters and objects move. However, live 2D animation has recently emerged as a powerful new way to communicate and convey ideas with animated characters. In live animation, human performers control cartoon characters in real-time, allowing them to interact and improvise directly with other actors and the audience. Recent examples from major studios include Stephen Colbert interviewing cartoon guests on The Late Show, Homer answering phone-in questions from viewers during a segment of The Simpsons, Archer talking to a live audience at ComicCon, and the stars of animated shows (e.g., Disney’s Star vs. The Forces of Evil, My Little Pony, cartoon Mr. Bean) hosting live chat sessions with their fans on YouTube and Facebook Live. In addition to these big budget, high-profile use cases, many independent podcasters and game streamers have started using live animated 2D avatars in their shows.

Enabling live animation requires a system that can capture the performance of a human actor and map it to corresponding animation events in real time. For example, Adobe Character Animator (Ch) - the predominant live 2D animation tool - uses face tracking to translate a performer’s facial expressions to a cartoon character and keyboard shortcuts to enable explicit triggering of animated actions, like hand gestures or costume changes. While such features give performers expressive control over the animation, the dominant component of almost every live animation performance is speech; in all the examples mentioned above, live animated characters spend most of their time talking with other actors or the audience. As a result, the most critical type of performance-to-animation mapping for live animation is lip sync - transforming an actor’s speech into corresponding mouth movements in the animated character. Convincing lip sync allows the character to embody the live performance.
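To make the lookahead idea concrete, here is a minimal Python sketch of how a streaming pipeline can trade a few frames of delay for future context. It is not the authors' model: `stream_with_lookahead`, `toy_classify`, and `added_latency_ms` are hypothetical names, the energy threshold is a stand-in for a trained LSTM, and the frame hop is an assumed parameter rather than a value taken from the system described here.

```python
from collections import deque
from typing import Callable, Deque, Iterable, Iterator, List, Sequence, Tuple

Frame = Sequence[float]  # one audio feature vector (hypothetical format)


def stream_with_lookahead(
    frames: Iterable[Frame],
    classify: Callable[[List[Frame]], str],
    lookahead: int,
) -> Iterator[Tuple[int, str]]:
    """Yield (frame_index, viseme) pairs, each delayed by `lookahead` frames.

    The label for frame t is produced only once frame t + lookahead has
    arrived, so the classifier sees a little future context. Frames within
    `lookahead` of the end of the stream are never labeled.
    """
    window: Deque[Frame] = deque(maxlen=lookahead + 1)
    for i, frame in enumerate(frames):
        window.append(frame)
        if len(window) == lookahead + 1:
            # window[0] is frame i - lookahead; the rest is its future context.
            yield i - lookahead, classify(list(window))


def added_latency_ms(lookahead: int, hop_ms: float) -> float:
    """Delay contributed by waiting for `lookahead` frames (excludes compute)."""
    return lookahead * hop_ms


def toy_classify(window: List[Frame]) -> str:
    """Stand-in for a trained model: mean absolute energy vs. a threshold."""
    energy = sum(sum(abs(x) for x in f) for f in window) / len(window)
    return "open" if energy > 0.5 else "closed"


if __name__ == "__main__":
    audio = [[0.0], [0.0], [1.0], [1.0], [1.0]]  # made-up feature frames
    for index, viseme in stream_with_lookahead(audio, toy_classify, lookahead=2):
        print(index, viseme)
    print(added_latency_ms(lookahead=2, hop_ms=10.0), "ms of added delay")
```

In this sketch, each extra frame of lookahead adds one hop of delay before a viseme can be emitted, which is why only a small amount of lookahead fits inside a tight latency budget.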
